What’s new for September 2023?

[Test Data] Test Data Pro

Test data will never be the same.

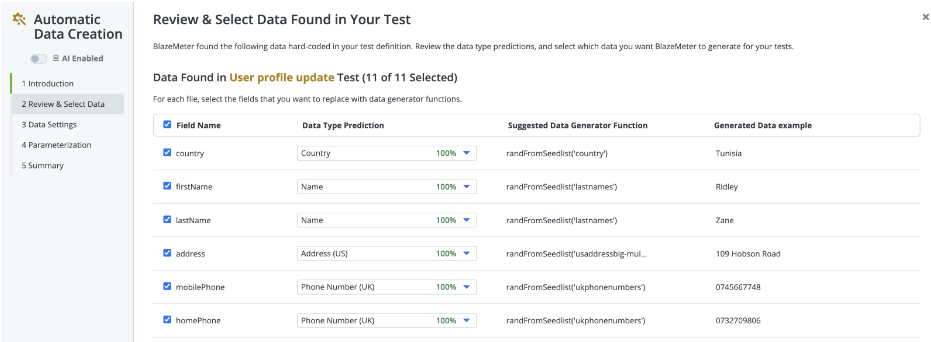

Tired of spending days manually managing test data? There’s a better way. Introducing BlazeMeter’s Test Data Pro – infused with the power of AI to eliminate major testing bottlenecks.

Test Data Pro assists you with new functionality such as:

✅ AI-Driven Data Profiler — Eliminate time-consuming manual test data aggregation by using robust, AI-driven automation. Maintain data integrity across tests for consistent, reliable results while expanding existing data sets with ease.

✅ AI-Driven Test Data Creator — Harness the power of AI to optimize your test data. Achieve comprehensive test coverage with unprecedented data diversity and seed list capabilities – generate names, cities, companies, colors, and more, in seconds.

✅ AI-Assisted Test Data Function Generator — Spend far less time building test data functions by using an AI-Assisted Function Generator. Use natural language to write functions without needing coding experience or memorizing argument names.

✅ Chaos Testing — Uncover hidden vulnerabilities by stress testing with “bad data” - invalid credit card numbers, malformatted dates, etc. Maximize resilience and uptime with automated negative testing.
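
To make the "bad data" idea concrete, here is a minimal sketch of how an invalid credit card number could be derived from a valid one. It is not how Test Data Pro generates its data; the function names `luhn_valid` and `corrupt_check_digit` are hypothetical and exist only for this illustration. It relies on the standard Luhn checksum used by payment card numbers.

```python
def luhn_valid(number: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    # Walk the digits from right to left, doubling every second one.
    for index, char in enumerate(reversed(number)):
        digit = int(char)
        if index % 2 == 1:
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def corrupt_check_digit(number: str) -> str:
    """Hypothetical helper: bump the last digit so the checksum no longer matches."""
    last = int(number[-1])
    return number[:-1] + str((last + 1) % 10)


valid_card = "4111111111111111"  # widely used test card number
bad_card = corrupt_check_digit(valid_card)

print(valid_card, luhn_valid(valid_card))
print(bad_card, luhn_valid(bad_card))
```

A negative test would then submit `bad_card` and assert that the application rejects it cleanly instead of failing in an unexpected way.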

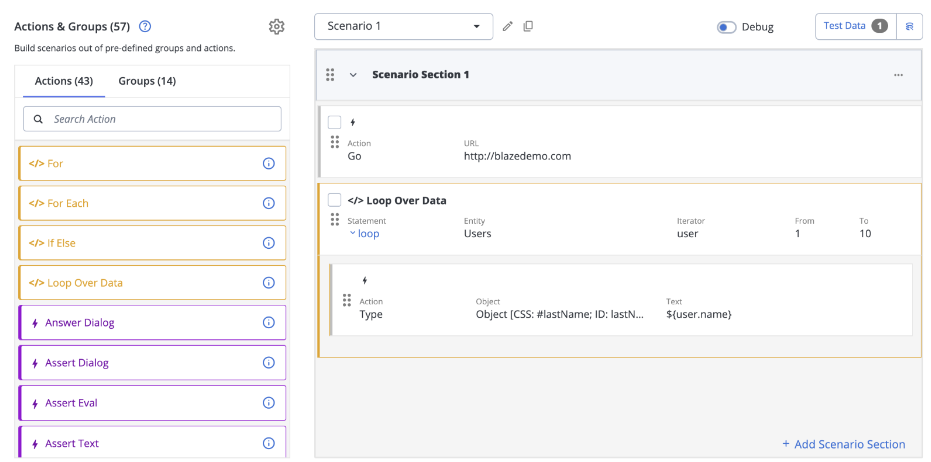

[Test Data] Run data-driven iterations in GUI Scriptless tests

In some scenarios, looping the entire Scriptless test over the data set is impractical or unnecessary, and you only want certain actions to loop over the data. BlazeMeter GUI Functional Scriptless tests introduce a new “Loop over data” action that does exactly that: one or more actions or action groups inside the loop repeat for each row of test data. The loop iterates over the test data rows, so the test data values are consumed one by one.
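
Conceptually, the loop behaves like the sketch below: only the actions placed inside the loop are repeated, once per data row, with parameters resolved from that row. This is an illustration in plain Python, not BlazeMeter's implementation. The action names, the `${...}` placeholder syntax, and the `run_loop` function are assumptions made for the example.

```python
import csv
import io
from string import Template

# Hypothetical test data; in practice this would be an attached CSV file.
CSV_DATA = "username,city\nalice,Prague\nbob,Boston\n"

# Hypothetical actions placed inside the loop, with parameter placeholders.
LOOPED_ACTIONS = [("type", "${username}"), ("select", "${city}")]


def run_loop(csv_text, actions):
    """Repeat the looped actions once per data row and return an execution log."""
    executed = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        for name, value in actions:
            # Resolve placeholders against the current row only.
            executed.append((name, Template(value).substitute(row)))
    return executed


log = run_loop(CSV_DATA, LOOPED_ACTIONS)
for entry in log:
    print(entry)
```

Actions outside the loop would run once, before or after it, regardless of how many rows the data set contains.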

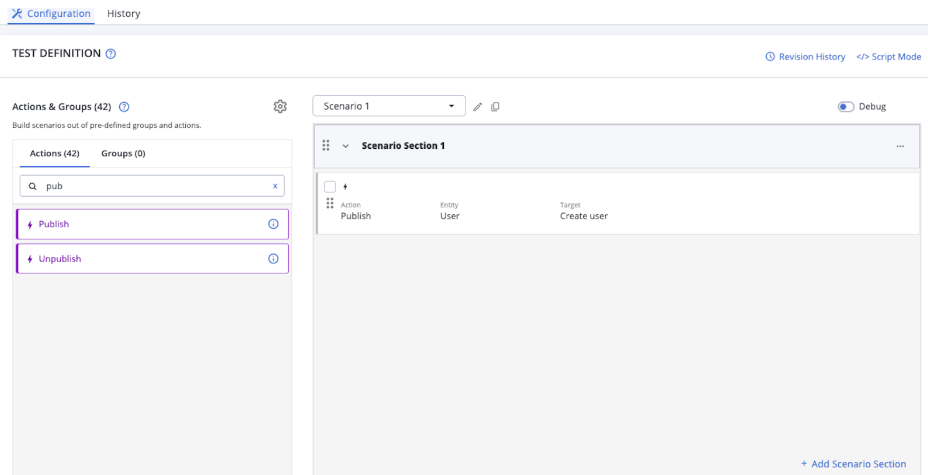

[Test Data] Run Test Data Orchestration from a GUI Functional test action

Test Data Orchestration is automatically triggered at the beginning and at the end of the test execution to prepare and clean up test data within the test environment. That applies to both Functional and Performance tests. However, in a Scriptless Functional scenario that runs a separate test iteration for each test data row, you may also need to prepare or clean up the test environment for each iteration. Therefore, a new Taurus action has been introduced to execute Test Data Orchestration targets at any point in Scriptless test scenarios, in addition to the default execution before and after the entire test.

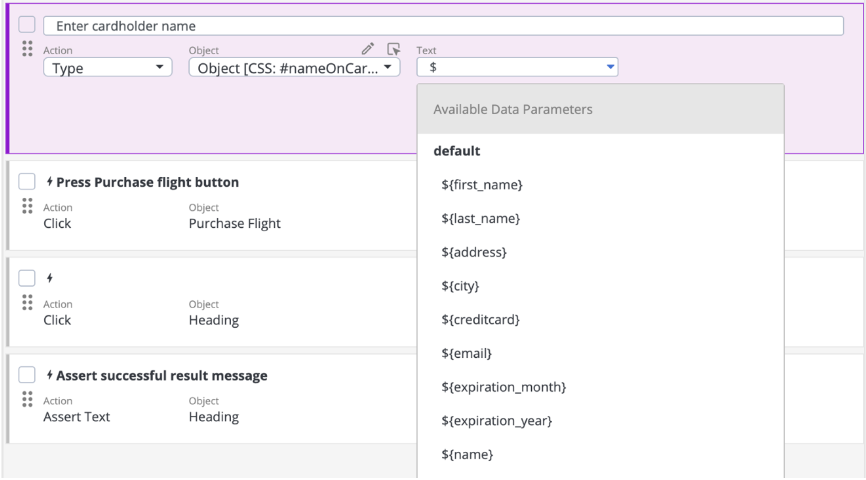

[Test Data] Test Data parameter suggestions in the GUI Scriptless test editor

It is common to have a CSV file or synthetic test data definitions attached to your GUI Scriptless test. Until now, you had to either type data parameters from memory or copy and paste them into your Scriptless actions manually, which is tedious and error-prone. Now, certain Scriptless actions like “Type” or “Select” display autosuggestions for available parameters directly in the Scriptless editor. Simply pick the right one from the list.

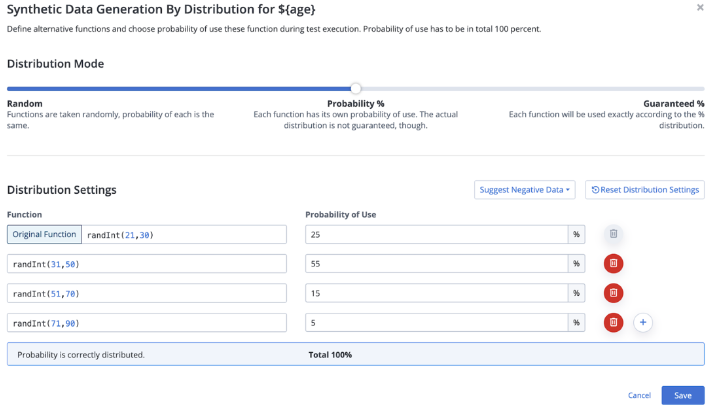

[Test Data] Test Data generation according to specific statistical distributions

A common use case in synthetic data generation is generating data according to a specific distribution. For example, a certain portion of users within a test scenario should be from the US and another portion from Europe. The BlazeMeter Test Data integration now lets you generate data according to random distributions, weighted percentage probabilities, or guaranteed percentage distributions.
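
The difference between weighted probabilities and guaranteed percentages can be sketched as follows. This is plain Python for illustration only; it does not use BlazeMeter's Test Data functions, and the names `weighted_sample` and `guaranteed_sample` are hypothetical. With weighted probabilities each row is drawn independently, so the percentages hold only on average. With a guaranteed distribution the row counts match the percentages exactly, up to rounding.

```python
import random
from collections import Counter


def weighted_sample(weights, n, rng):
    """Draw n values independently; observed shares only approximate the weights."""
    values = list(weights)
    return rng.choices(values, weights=[weights[v] for v in values], k=n)


def guaranteed_sample(weights, n, rng):
    """Return n values whose counts match the weights exactly (up to rounding)."""
    total = sum(weights.values())
    exact = {v: n * w / total for v, w in weights.items()}
    counts = {v: int(x) for v, x in exact.items()}
    # Hand out rows lost to rounding down, largest fractional part first.
    remainder = n - sum(counts.values())
    by_fraction = sorted(exact, key=lambda v: exact[v] - counts[v], reverse=True)
    for v in by_fraction[:remainder]:
        counts[v] += 1
    rows = [v for v, c in counts.items() for _ in range(c)]
    rng.shuffle(rows)  # keep the exact counts but randomize the order
    return rows


rng = random.Random(42)
regions = {"US": 60, "Europe": 30, "Other": 10}

weighted = Counter(weighted_sample(regions, 50, rng))
guaranteed = Counter(guaranteed_sample(regions, 50, rng))

print("weighted:  ", dict(weighted))
print("guaranteed:", dict(guaranteed))
```

For 50 rows, the guaranteed variant always yields 30 US, 15 Europe, and 5 Other rows, while the weighted variant varies from run to run around those values.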

[Performance] Java 17 is now available

Java 17 has been added to the list of supported Java versions and can now be used together with the latest capabilities of various executors.

[API Monitoring] Provide Certificate via local filesystem

According to customer feedback, some organizations have policies that prevent or limit the storing of TLS certificates in cloud environments, and these customers need a way to provide certificates for on-premise executions. While this could be achieved with BlazeMeter Private Locations, it was not possible through the API Monitoring Radar agent. We have introduced new CLI parameters that refer to certificates stored on the local file system of the machine where the agent is running. This way, the certificate never leaves the premises and is used for API test executions via an on-premise agent.

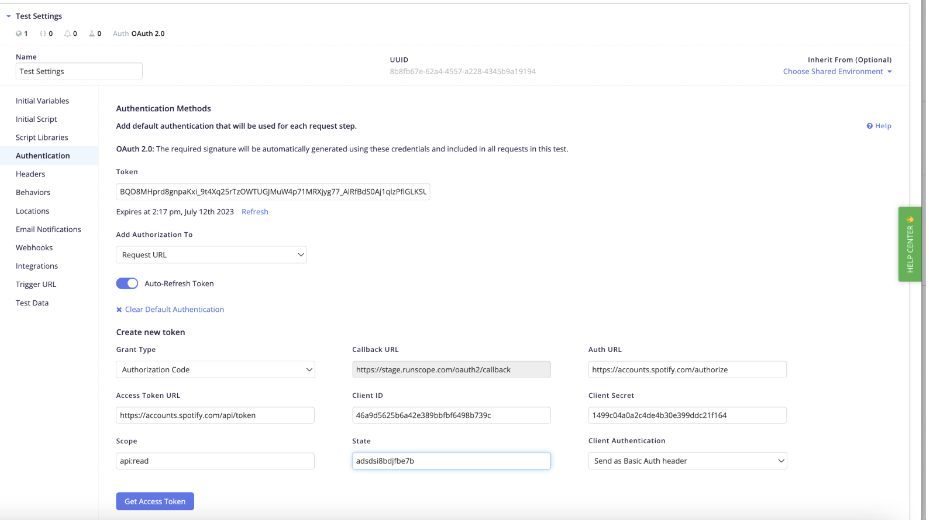

[API Monitoring] Built-in support for OAuth 2.0 authorization

API Monitoring already provides built-in support for various API authorization methods and schemes: basic authorization, certificate authorization, and OAuth 1.0. As OAuth 2.0 has become the established API authorization standard, API Monitoring now supports OAuth 2.0 with a convenient setup where users can either provide OAuth 2.0 tokens directly or generate them. Added capabilities include automatic refreshing of expired tokens by using credentials from the API provider. OAuth 2.0 support is now available, together with the other authorization methods, in both environment-level and step-level settings.
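
The automatic refresh described above follows a common pattern: cache the access token and request a new one shortly before it expires. The sketch below illustrates that pattern only and is not API Monitoring's implementation. The `TokenCache` class, the `leeway` parameter, and the fake token endpoint are assumptions; the `access_token` and `expires_in` fields are the standard OAuth 2.0 token response fields.

```python
import time


class TokenCache:
    """Hypothetical cache that re-fetches an OAuth 2.0 token when it is about to expire."""

    def __init__(self, fetch_token, clock=time.monotonic, leeway=30):
        self._fetch_token = fetch_token  # callable returning a token response dict
        self._clock = clock
        self._leeway = leeway            # refresh this many seconds before expiry
        self._token = None
        self._expires_at = 0.0

    def get(self):
        if self._token is None or self._clock() >= self._expires_at - self._leeway:
            response = self._fetch_token()
            self._token = response["access_token"]
            self._expires_at = self._clock() + response["expires_in"]
        return self._token


# Demo with a fake clock and a fake token endpoint (no network access).
now = [0.0]
calls = []


def fake_fetch():
    calls.append(now[0])
    return {"access_token": f"token-{len(calls)}", "expires_in": 3600}


cache = TokenCache(fake_fetch, clock=lambda: now[0])
first = cache.get()       # initial fetch
now[0] = 1000.0
second = cache.get()      # still valid, served from the cache
now[0] = 3590.0
third = cache.get()       # inside the 30-second leeway, so refreshed
print(first, second, third)
```

In a real setup, `fetch_token` would call the API provider's token endpoint with the configured credentials.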

[API Monitoring] Preserve “skip” setting when the test is duplicated

API Monitoring test definitions let you selectively skip certain test steps. Skipped steps remain part of the test definition, but they are not run during the execution. However, when users duplicated a test, the “skip” setting was not preserved, which led to unexpected or invalid test executions because steps that should have been skipped were run. API Monitoring now preserves the step skip setting when a test is duplicated.

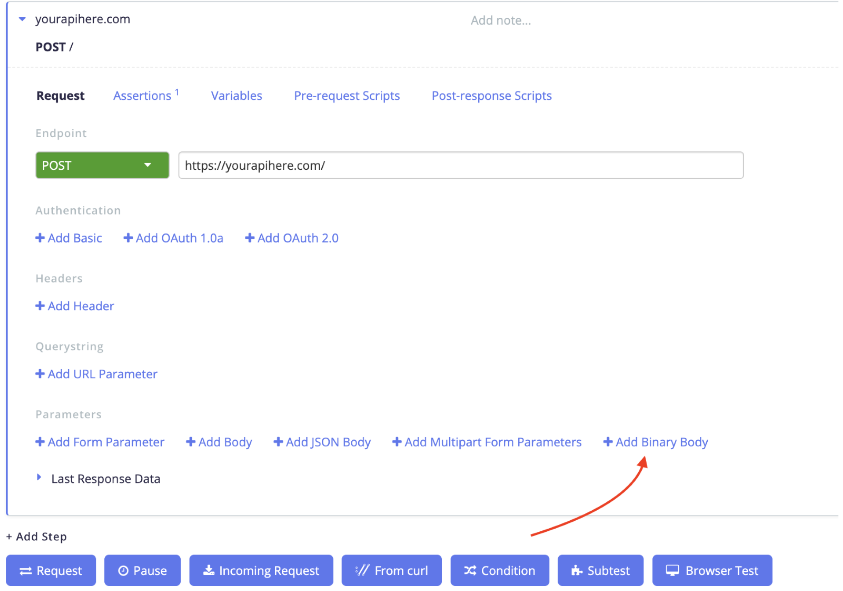

[API Monitoring] Support for binary content in request body

API Monitoring now natively supports sending raw binary content in the request body for POST, PUT, and PATCH requests. The new “Add Binary Body” option lets you provide an uploaded file as the request body, and the binary data read from the file is then sent over HTTP unchanged. This way, API Monitoring fully supports testing scenarios like image uploads, media uploads, imports of compressed data, etc. In addition, the process of retrieving binary data from GET responses and passing it to subsequent API requests has been improved to avoid unnecessary base64 encoding.
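
To show what a raw binary body means at the HTTP level, and why avoiding base64 matters, here is a minimal sketch using Python's standard library. It only builds the request object and does not send anything. The URL, the `build_binary_request` helper, and the `application/octet-stream` content type are assumptions for illustration, not API Monitoring settings.

```python
import base64
import urllib.request


def build_binary_request(url, payload, method="POST"):
    """Hypothetical helper: build a request whose body is the raw bytes, unencoded."""
    if method not in {"POST", "PUT", "PATCH"}:
        raise ValueError(f"binary bodies are not supported for {method}")
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/octet-stream"},
        method=method,
    )


# 300 bytes of sample binary data, including bytes that are not valid text.
payload = bytes(range(256)) + b"\x00" * 44

request = build_binary_request("https://api.example.com/upload", payload)
encoded = base64.b64encode(payload)

print(request.get_method(), len(request.data))
print("raw bytes:", len(payload), "base64 bytes:", len(encoded))
```

The base64 form is about a third larger than the raw payload, and the receiving side would also need to decode it, which is the overhead that passing binary data through directly avoids.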