What’s new for October 2023?

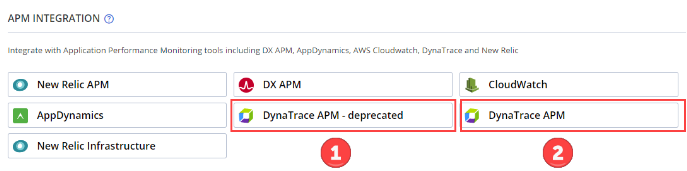

[Performance]: New Dynatrace integration

Dynatrace is an Application Performance Monitoring (APM) tool designed for analyzing the performance of application servers, database servers, and web services.

Because Dynatrace deprecated its v1 APIs in version 1.243 and replaced them with v2 APIs, we have created a new Dynatrace integration to accommodate this change.

BlazeMeter now includes two versions of the Dynatrace APM integration:

- If you are using Dynatrace version 1.243 or higher, use the Dynatrace APM integration.

- If you are using a Dynatrace version lower than 1.243, use the Dynatrace APM – deprecated integration.

[Mock Services]: Reordering of processing actions

Customers can now reorder processing actions after creating them, which changes the order in which those actions are called. Reordering is done with a simple drag-and-drop action.

[Mock Services]: Analytics updates

The request and response bodies are now shown in the Analytics view for each Mock Service. This also applies to all processing actions, so customers can see which requests were made and which responses were received, not only for the transaction but also for its processing actions. In addition, times are now shown down to the second. This is particularly helpful when a series of processing calls is made one after another, because customers can see which calls were made and at what time.

[Mock Services]: Import/Export Services enhancements

When importing a previously exported Service, all transactions within the Service(s), including all processing actions, are imported as well. We recommend that customers export (back up) their Services periodically. If one or more Services are accidentally deleted, the exported backup can be used to import the lost transactions.

[Auto Correlation Recorder]: Upload Correlation Rule Templates directly from JMeter to BlazeMeter

Saving time just got easier! With the latest ACR plugin, you can now upload correlation rule templates directly to BlazeMeter with one click. No more saving locally and uploading separately.

This enhancement streamlines the correlation template workflow, allowing you to go from JMeter to BlazeMeter in seconds. You can now focus on creating templates rather than on a multi-step upload process.

Say goodbye to the two-step upload grind. This new seamless experience lets you instantly access your templates across JMeter and BlazeMeter. Spend less time managing templates and more time innovating your tests.

[Performance]: BlazeMeter integration with Datadog APM now available

Datadog has become a notable APM tool in the industry. Given its importance and value to our customers, BlazeMeter now integrates with Datadog APM.

In the first version of the integration, users can expect:

- Comprehensive Performance Overview: Like other APM integrations, linking Datadog offers insights into both application and infrastructure performance, both during and after load testing. You can view specific Datadog metrics (such as CPU, memory, and storage) directly in the Timeline report, alongside BlazeMeter’s own metrics.

- Effortless Account Connection: Simply input your Datadog credentials in BlazeMeter to establish the link.

- Customizable Metric Selection: Design a Datadog profile specifying which metrics are pertinent during the load test.

- Smooth Test Integration: Seamlessly blend your Datadog setup into your performance tests.

- Enhanced Report Insight: The Timeline report now furnishes a well-rounded view, encompassing both Datadog and BlazeMeter performance metrics.

Sliding Window Feature: Enhancing Shift Left/Test Automation Failure Criteria

In traditional testing scenarios, the assessment of failure criteria was typically done after the completion of the test. With the introduction of the Sliding Window Feature, we’re offering a more efficient approach.

What to expect:

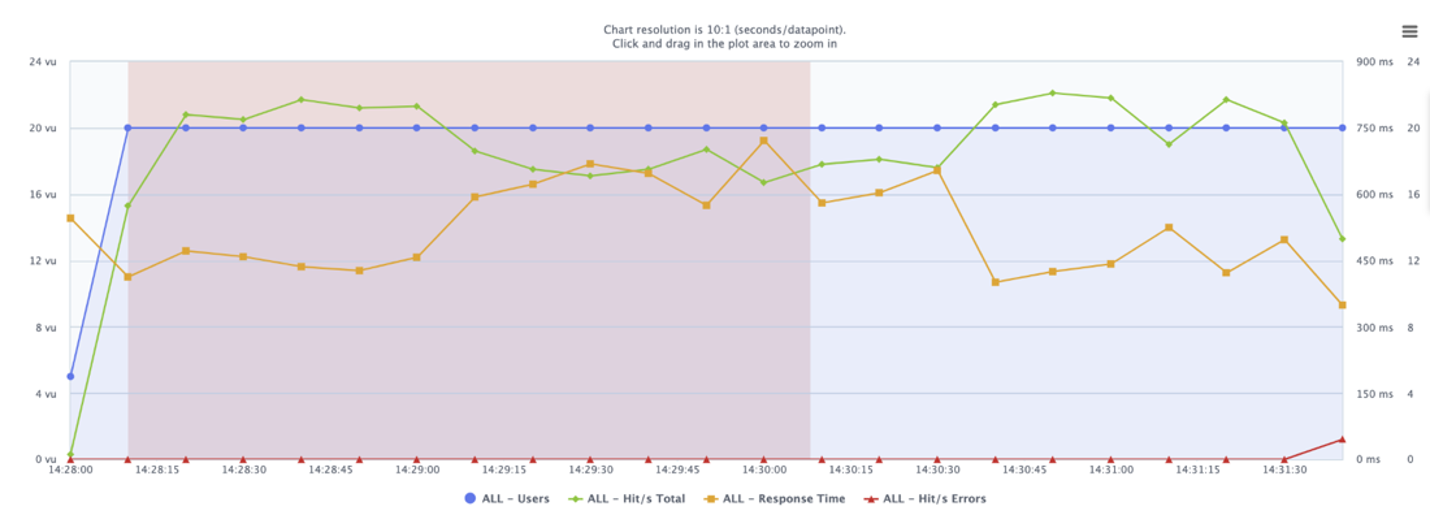

- Continuous Monitoring: Rather than evaluating only at the end of the test, the Sliding Window method checks throughout the test duration. If you’ve set failure criteria, such as an average response time exceeding 500, BlazeMeter monitors the performance in real time, every minute.

- Segmented Analysis: At specified intervals, like at minute 01:00, BlazeMeter evaluates the performance of the preceding minute, such as 00:00-01:00. This process is repeated at subsequent intervals.

- Immediate Violation Detection: If the designated threshold is exceeded at any checkpoint, BlazeMeter promptly flags it as a ‘Violation’.

- Clear Reporting: In the event of violations, the Timeline report highlights these periods with a distinct red rectangle, making it easier to identify and investigate areas of concern.

The Sliding Window feature offers a more proactive approach to test evaluations, providing insights sooner and more frequently.
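The per-minute evaluation described above can be illustrated with a minimal Python sketch. It is not BlazeMeter's implementation: the `Sample` type, the `find_violations` function, the fixed 60-second window, and the choice of milliseconds as the unit for the 500 threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Sample:
    timestamp_s: float  # seconds since the test started
    response_ms: float  # response time; milliseconds is an assumed unit


def find_violations(
    samples: List[Sample],
    threshold_ms: float = 500.0,  # hypothetical threshold from the example above
    window_s: int = 60,           # one-minute windows, as described in the text
) -> List[Tuple[int, int]]:
    """Return the (start, end) windows whose average response time exceeds the threshold."""
    if not samples:
        return []
    violations = []
    last = max(s.timestamp_s for s in samples)
    start = 0
    while start <= last:
        end = start + window_s
        # Collect the response times that fall inside the current window.
        in_window = [s.response_ms for s in samples if start <= s.timestamp_s < end]
        if in_window and sum(in_window) / len(in_window) > threshold_ms:
            violations.append((start, end))
        start = end
    return violations


samples = [
    Sample(10, 300), Sample(50, 400),   # window 00:00-01:00, average 350
    Sample(70, 600), Sample(110, 700),  # window 01:00-02:00, average 650
    Sample(130, 200),                   # window 02:00-03:00, average 200
]
print(find_violations(samples))
```

In this sketch only the second window is reported, which corresponds to the period that the Timeline report would highlight as a violation.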

BlazeMeter’s support for Taurus’ “Stop” & “Timeframe” commands

BlazeMeter has added support for Taurus’ “timeframe” and “stop”/“continue” pass/fail commands. These are now integrated with our “sliding window” and “stop” failure criteria configurations. Here’s what this means for you:

- Automated CI/CD Process: This integration allows a more automated CI/CD process, complementing our shift left approach.

- Efficient Testing: Tests can be terminated early when they fail, especially if initiated through Taurus CLI. This saves both time and resources.

In the past, if you ran Taurus in the cloud via BlazeMeter, the “Stop” command was not recognized. This meant that the test would continue to run even if a failure was detected. With this update, when using Taurus in the cloud, the “Stop” command is respected. This applies whether you are running a test from the Taurus CLI or using a YAML script directly in BlazeMeter.

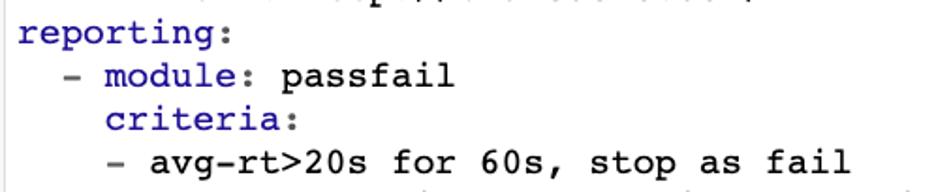

To use the stop function, the pass/fail module needs to specify the timeframe “for 1 minute” and include a stop command. Here are some examples of pass/fail criteria that stop the test if the average response time exceeds 20 seconds for one minute:

- avg-rt>20s for 1m, stop as fail

- avg-rt>20s for 60s, stop as failed

- avg-rt>20s for 1m, stop
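As a rough illustration of where such a criterion lives, the following Python sketch builds the `reporting` section of a Taurus configuration with the `passfail` module and prints it as JSON, which Taurus accepts alongside YAML. The helper name `build_passfail_config` is hypothetical, and a real configuration would also need an `execution` section describing the test itself.

```python
import json
from typing import Dict, Iterable, List


def build_passfail_config(criteria: Iterable[str]) -> Dict[str, List[dict]]:
    """Wrap pass/fail criteria strings in a Taurus 'reporting' section (hypothetical helper)."""
    return {
        "reporting": [
            {
                "module": "passfail",        # Taurus pass/fail reporting module
                "criteria": list(criteria),  # shorthand criteria strings
            }
        ]
    }


# Stop the test as failed if the average response time stays above 20 s for 1 minute.
config = build_passfail_config(["avg-rt>20s for 1m, stop as failed"])
print(json.dumps(config, indent=2))
```

The printed JSON would be merged with the rest of the test configuration before the run.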

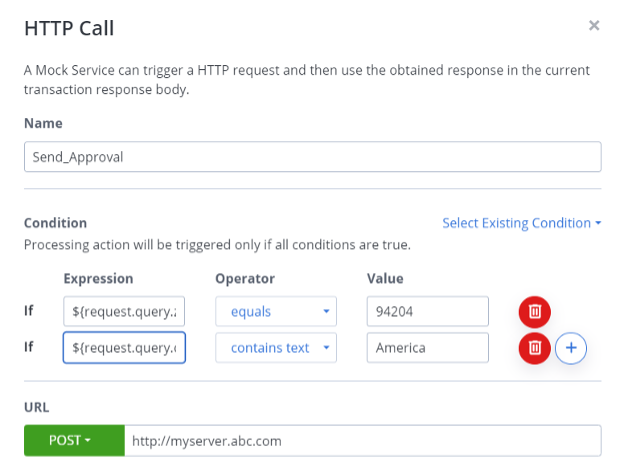

[Mock Services]: Conditional routing logic for processing actions

Processing actions can now be fired based on conditional logic. When a transaction is matched, the first processing action is evaluated based on the optional conditional logic. Each condition contains an expression that is validated against a value using the selected operator. If multiple conditions exist, all of them must be true for the processing action to fire.
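The "all conditions must be true" rule can be sketched in a few lines of Python. This is only an illustration of the logic, not the Mock Services engine: the `Condition` type, the operator names (`equals`, `not_equals`, `contains`), and the `should_fire` function are assumptions and do not reflect the operators BlazeMeter actually offers.

```python
import operator
from typing import Callable, Dict, List, NamedTuple


class Condition(NamedTuple):
    actual: str    # value the expression resolved to (assumed to be a string)
    op: str        # name of the selected operator (hypothetical names)
    expected: str  # value to validate against


# Hypothetical operator table for illustration only.
OPERATORS: Dict[str, Callable[[str, str], bool]] = {
    "equals": operator.eq,
    "not_equals": operator.ne,
    "contains": lambda actual, expected: expected in actual,
}


def should_fire(conditions: List[Condition]) -> bool:
    """A processing action fires only if every condition holds.

    An empty list means no conditional logic was configured, so the action fires.
    """
    return all(OPERATORS[c.op](c.actual, c.expected) for c in conditions)


conditions = [
    Condition(actual="gold", op="equals", expected="gold"),
    Condition(actual="order-1234", op="contains", expected="order-"),
]
print(should_fire(conditions))
```

Because `all` short-circuits, evaluation in this sketch stops at the first condition that fails.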

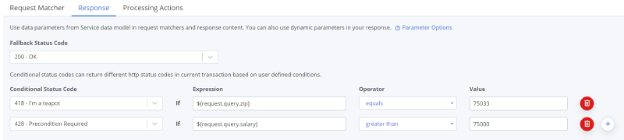

[Mock Services]: Ability to set response status dynamically

The Mock Service response status code can now be set dynamically. This is done by specifying the logic for conditional status codes. Expressions are evaluated against the specified values to determine which status code is sent. If none of the conditional status codes match, the Fallback Status Code is sent back.
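The fallback behavior can be pictured with a short Python sketch. The `StatusRule` type and `resolve_status` function are hypothetical, the comparison is simplified to plain equality, and the assumption that the first matching rule wins is not confirmed by these notes.

```python
from typing import List, NamedTuple


class StatusRule(NamedTuple):
    actual: str    # value the expression resolved to
    expected: str  # value it is compared against
    status: int    # status code to send when the rule matches


def resolve_status(rules: List[StatusRule], fallback_status: int) -> int:
    """Return the status of the first matching rule, or the fallback if none match."""
    for rule in rules:
        if rule.actual == rule.expected:  # simplified: equality only
            return rule.status
    return fallback_status


rules = [
    StatusRule(actual="expired", expected="missing", status=404),
    StatusRule(actual="expired", expected="expired", status=401),
]
print(resolve_status(rules, fallback_status=200))
```

With these sample rules the second rule matches, so its status code is chosen. With no matching rule, the fallback value is returned instead.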

[Mock Services]: Specifying an alias and password for SSL calls

For SSL connections that use a keystore, the keystore generally contains multiple certificates. When an HTTPS call is made, customers can provide the alias of the certificate to use for that SSL call. To use this feature, the keystore must have aliases defined for its certificates. The certificates in an existing keystore are listed so that the user can select the appropriate one for the call.