
May 27, 2022

Performance testing has been undergoing a makeover over the past few years. Agile development teams are adopting DevOps practices, including performance baseline tests, more automation, more observability, and quality gates backed by more accurate testing. Together, these contribute to better decisions about our releases.

When testing, it often boils down to one question: should I release this new version when my performance test indicates a performance degradation? A performance baseline can help answer it.

What is a Performance Baseline?

A Performance Baseline is the set of performance criteria that the business accepts for the app or service in question. In performance testing, it is also referred to as the Service Level Agreement (SLA). The individual criteria that are measured and enforced are the Service Level Objectives (SLOs).

The baseline is a fixed reference point against which future tests are compared. It serves to monitor the app for stability, identify regressions, and enforce the business SLA. Without it, the performance/non-functional testing team has no reference to measure against, whether during the release of a new version or before critical events.

What is a Performance Baseline Test?

A baseline test is a performance test that shows the App-Under-Test performing within the acceptable ranges of the SLOs when it is tested with a specific load and a scenario that simulates real-world behavior. That test becomes the baseline test, and until a new baseline is chosen, every future run of that test is compared against it.

Who defines the SLOs and SLAs in performance testing? Typically, this falls under Product Management’s responsibility. The testers should incorporate those SLOs, i.e., the criteria that have been agreed on, into their test. This ensures the criteria are enforced and compared against whenever the same test runs again.

What are the Benefits of Having SLAs in Performance Testing?

Without a baseline test or SLA in performance testing, you can run a test many times and get proper reports, but the results carry little meaning. BlazeMeter’s Failure Criteria fail the test when a certain Service Level Indicator (SLI) breaches its SLO, e.g. a median response time over X seconds, which helps fail the test early when it shows sub-par performance. Failure Criteria become even more accurate and contextual when you can fail a test using the baseline’s SLIs as the criteria.
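To make the idea of an SLI breaching an SLO concrete, here is a minimal Python sketch. It is not BlazeMeter’s implementation or API; the function name `breaches_slo`, the sample values, and the 1.5-second threshold are all assumptions chosen for illustration.

```python
from statistics import median


def breaches_slo(response_times_s, max_median_s):
    """Return True when the median response time (the SLI)
    exceeds the agreed threshold (the SLO).

    Hypothetical helper for illustration only; not a BlazeMeter API.
    """
    return median(response_times_s) > max_median_s


# Made-up response times, in seconds, collected during a test run.
samples = [0.8, 1.1, 0.9, 2.4, 1.0]

# The one slow outlier (2.4 s) does not move the median past the threshold.
if breaches_slo(samples, max_median_s=1.5):
    print("SLO breached: fail the test")
else:
    print("SLO met")
```

The median is used here because it is the example SLI named above; any other SLI, such as a percentile or an error rate, could be checked the same way.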

BlazeMeter also lets you set quality gates in a much more accurate way than before. Think of a test that runs on a certain cadence as part of your pipeline. Imagine how impactful it is to identify performance degradation at this early stage, way before any end-to-end tests are executed.
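One way to picture such a quality gate is a small check that compares the current run against the baseline and reports any metric that degraded beyond a tolerance. The sketch below is an assumption-laden illustration, not BlazeMeter functionality: `gate_passes`, the metric names, and the 10% tolerance are hypothetical, and it assumes every metric is "lower is better".

```python
def gate_passes(baseline, current, tolerance_pct=10.0):
    """Compare 'lower is better' metrics against the baseline.

    Returns (passed, failures), where failures maps each degraded
    metric to its percentage change. Hypothetical helper, not a
    BlazeMeter API.
    """
    failures = {}
    for name, base_value in baseline.items():
        change_pct = (current[name] - base_value) / base_value * 100.0
        if change_pct > tolerance_pct:
            failures[name] = round(change_pct, 1)
    return len(failures) == 0, failures


# Made-up numbers for illustration.
baseline = {"avg_response_time_ms": 200.0, "p90_response_time_ms": 400.0}
current = {"avg_response_time_ms": 210.0, "p90_response_time_ms": 480.0}

passed, failures = gate_passes(baseline, current)
print(passed, failures)
```

In a pipeline, a failing gate would typically translate into a non-zero exit code so that later stages do not run.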

Configuring Your Performance Baseline Tests in BlazeMeter

How to Create Your First Baseline Test

It’s a new app, a new test and you haven’t set a report as the Baseline yet. If you have a good guess of your target app’s limits, feel free to configure the test accordingly. However, if you are relying on the first test to shed some light on your baseline criteria, the best way to go would be to start small and increase the load from there. This can be achieved by starting a multi-test with 10-20% of what you believe might be the 100% that your app can handle, and adding load dynamically on the fly.
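As a rough illustration of the "start small and increase" approach, the sketch below plans a series of load steps from a guessed maximum. The function `load_steps`, the 500-user estimate, and the 10% step size are assumptions for the example; in BlazeMeter itself the load would be adjusted in the running test rather than computed by a script like this.

```python
def load_steps(estimated_max_users, start_pct=10, step_pct=10):
    """Plan user counts from start_pct up to 100% of the estimate.

    Integer percentages keep the arithmetic exact. Hypothetical
    helper for planning only; not part of any BlazeMeter API.
    """
    return [
        estimated_max_users * pct // 100
        for pct in range(start_pct, 101, step_pct)
    ]


# Assume the app is believed to handle about 500 concurrent users.
for users in load_steps(500):
    print(f"run at {users} users, check the SLIs, then add load")
```

Stopping at the first step where the SLIs fall outside the agreed SLOs gives a reasonable candidate for the load of the baseline test.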

How to Set a Report as Your Baseline

Once you run a test and you believe you found your baseline, it’s very easy to set that report as your baseline.

- Go to tests -> select your test. By default, you’ll see the test’s History view.

- Find the report you wish to set as a baseline.

- Click on the “Set as Baseline” button on the right. Note that if you need to choose between multiple reports and want more visibility into which one you’re looking for, you can expand each report in the list and see its high-level metrics, e.g. Avg Response Time, Avg Throughput, etc., for added context.

2. Via the Request Stats report - for an in-depth comparison

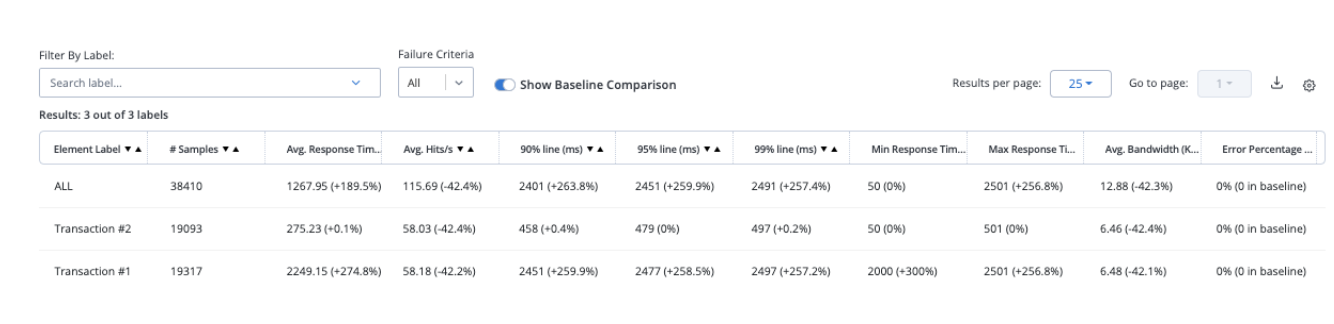

This view enables you to drill down into the results of a test, examining all the different metrics, per label, and their respective baseline measurements.

When observing the Request Stats report, you’ll find a toggle button called ‘Show Baseline Comparison’. Toggle it on. You will see a comparison to the baseline in percentages, next to each metric, per each test label.
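The percentages shown in that comparison can be thought of as the relative change of each metric against its baseline value. The following Python sketch shows that arithmetic under stated assumptions: the function `compare_to_baseline`, the label and metric names, and the numbers are all hypothetical, and the exact formula BlazeMeter uses is not given in this article.

```python
def compare_to_baseline(baseline, current):
    """Percentage change per label and metric, relative to the baseline.

    Both arguments have the shape {label: {metric: value}}.
    Labels or metrics missing from the baseline, and baseline
    values of zero, are skipped. Hypothetical helper only.
    """
    result = {}
    for label, metrics in current.items():
        base_metrics = baseline.get(label)
        if base_metrics is None:
            continue
        result[label] = {}
        for metric, value in metrics.items():
            base = base_metrics.get(metric)
            if not base:
                continue
            result[label][metric] = round((value - base) / base * 100.0, 1)
    return result


# Made-up per-label results for illustration.
baseline_run = {"login": {"avg_rt_ms": 200.0, "hits_per_s": 50.0}}
current_run = {"login": {"avg_rt_ms": 250.0, "hits_per_s": 45.0}}

print(compare_to_baseline(baseline_run, current_run))
```

A positive value means the metric grew relative to the baseline, which is a regression for response time but an improvement for throughput, so the sign has to be read per metric.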

3. Via the Compare Reports feature - for a refined comparison

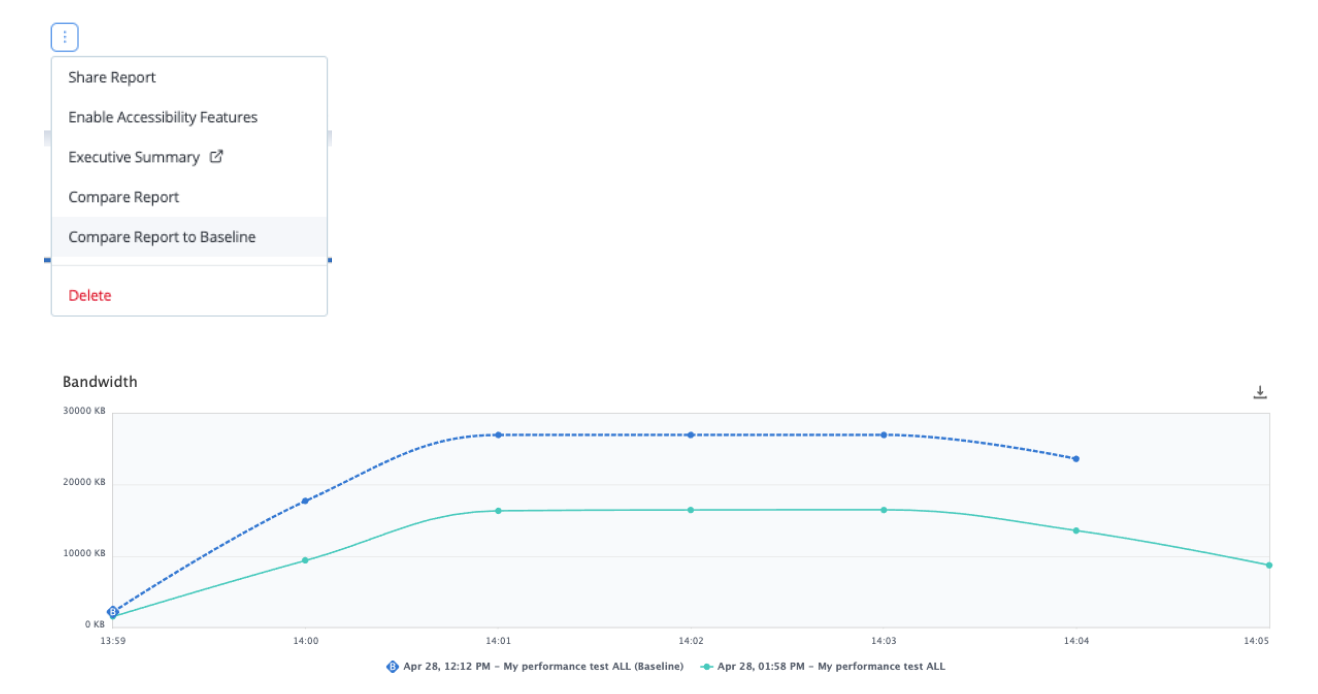

The Compare Reports feature is not new, but we did set up a quick shortcut to compare your current report to the baseline. You can now see a comparison between the current report and the baseline report, per metric. You can also refine the comparison to be based on a certain label.

- When viewing the report, click on the vertical ellipsis next to the report’s name.

- Select the ‘Compare Report to Baseline’ option.

- Click on ‘Compare’.

- To compare per label, select the labels you want in the respective drop-down menu at the top.

That’s it! BlazeMeter enables you to provide better service to your customers by enforcing SLAs built into your performance tests.